57. LQ Control: Foundations

In addition to what’s in Anaconda, this lecture will need the following libraries:

!pip install quantecon

57.1. Overview

Linear quadratic (LQ) control refers to a class of dynamic optimization problems that have found applications in almost every scientific field.

This lecture provides an introduction to LQ control and its economic applications.

As we will see, LQ systems have a simple structure that makes them an excellent workhorse for a wide variety of economic problems.

Moreover, while the linear-quadratic structure is restrictive, it is in fact far more flexible than it may appear initially.

These themes appear repeatedly below.

Mathematically, LQ control problems are closely related to the Kalman filter:

Recursive formulations of linear-quadratic control problems and Kalman filtering problems both involve matrix Riccati equations.

Classical formulations of linear control and linear filtering problems make use of similar matrix decompositions (see for example this lecture and this lecture).

In reading what follows, it will be useful to have some familiarity with

matrix manipulations

vectors of random variables

dynamic programming and the Bellman equation (see for example this lecture and this lecture)

For additional reading on LQ control, see, for example,

[Ljungqvist and Sargent, 2018], chapter 5

[Hansen and Sargent, 2008], chapter 4

[Hernandez-Lerma and Lasserre, 1996], section 3.5

In order to focus on computation, we leave longer proofs to these sources (while trying to provide as much intuition as possible).

Let’s start with some imports:

import matplotlib.pyplot as plt

plt.rcParams["figure.figsize"] = (11, 5) #set default figure size

import numpy as np

from quantecon import LQ

57.2. Introduction

The “linear” part of LQ is a linear law of motion for the state, while the “quadratic” part refers to preferences.

Let’s begin with the former, move on to the latter, and then put them together into an optimization problem.

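57.2.1. The Law of Motion

Let $x_t$ be a vector describing the state of some economic system.

Suppose that $x_t$ follows a linear law of motion given by

$$
x_{t+1} = A x_t + B u_t + C w_{t+1}, \qquad t = 0, 1, 2, \ldots \tag{57.1}
$$

Here

$u_t$ is a “control” vector, incorporating choices available to a decision-maker confronting the current state $x_t$

$\{w_t\}$ is an uncorrelated zero mean shock process satisfying $\mathbb{E} w_t w_t' = I$, where the right-hand side is the identity matrix

Regarding the dimensions

$x_t$ is $n \times 1$, $A$ is $n \times n$

$u_t$ is $k \times 1$, $B$ is $n \times k$

$w_t$ is $j \times 1$, $C$ is $n \times j$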

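57.2.1.1. Example 1

Consider a household budget constraint given by

$$
a_{t+1} + c_t = (1 + r) a_t + y_t
$$

Here $a_t$ is assets, $r$ is a fixed interest rate, $c_t$ is current consumption, and $y_t$ is current non-financial income.

If we suppose that $\{y_t\}$ is serially uncorrelated and $N(0, \sigma^2)$, then, taking $\{w_t\}$ to be standard normal, we can write the system as

$$
a_{t+1} = (1 + r) a_t - c_t + \sigma w_{t+1}
$$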

This is clearly a special case of (57.1), with assets being the state and consumption being the control.

57.2.1.2. Example 2

One unrealistic feature of the previous model is that non-financial income has a zero mean and is often negative.

This can easily be overcome by adding a sufficiently large mean.

Hence in this example, we take $y_t = \sigma w_{t+1} + \mu$ for some positive real number $\mu$.

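Another alteration that’s useful to introduce (we’ll see why soon) is to change the control variable from consumption $c_t$ to the deviation of consumption from some “ideal” quantity $\bar c$.

(Most parameterizations will be such that $\bar c$ is large relative to the amount of consumption that is attainable in each period, and hence the household wants to increase consumption.)

For this reason, we now take our control to be $u_t := c_t - \bar c$.

In terms of these variables, the budget constraint $a_{t+1} = (1 + r) a_t - c_t + y_t$ becomes

$$
a_{t+1} = (1 + r) a_t - u_t - \bar c + \sigma w_{t+1} + \mu \tag{57.2}
$$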

How can we write this new system in the form of equation (57.1)?

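If, as in the previous example, we take $a_t$ as the state, then we run into a problem: the law of motion contains some constant terms on the right-hand side.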

This means that we are dealing with an affine function, not a linear one (recall this discussion).

Fortunately, we can easily circumvent this problem by adding an extra state variable.

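In particular, if we write

$$
\begin{pmatrix} a_{t+1} \\ 1 \end{pmatrix}
=
\begin{pmatrix} 1 + r & -\bar c + \mu \\ 0 & 1 \end{pmatrix}
\begin{pmatrix} a_t \\ 1 \end{pmatrix}
+
\begin{pmatrix} -1 \\ 0 \end{pmatrix} u_t
+
\begin{pmatrix} \sigma \\ 0 \end{pmatrix} w_{t+1}
\tag{57.3}
$$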

then the first row is equivalent to (57.2).

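Moreover, the model is now linear and can be written in the form of (57.1) by setting

$$
x_t := \begin{pmatrix} a_t \\ 1 \end{pmatrix}, \quad
A := \begin{pmatrix} 1 + r & -\bar c + \mu \\ 0 & 1 \end{pmatrix}, \quad
B := \begin{pmatrix} -1 \\ 0 \end{pmatrix}, \quad
C := \begin{pmatrix} \sigma \\ 0 \end{pmatrix}
\tag{57.4}
$$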

In effect, we’ve bought ourselves linearity by adding another state.
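As a quick numerical check of the extra-state trick, the sketch below builds the two-dimensional system for the household example and compares its first row with the affine budget constraint computed directly. The parameter values are the ones used in the application later in this lecture; the helper name `step` and the test values for assets, control and shock are illustrative assumptions.

```python
import numpy as np

# Parameter values borrowed from the application later in the lecture
r, c_bar, μ, σ = 0.05, 2.0, 1.0, 0.25

# Augmented system: state x_t = (a_t, 1)', control u_t = c_t - c_bar
A = np.array([[1 + r, -c_bar + μ],
              [0.0,   1.0]])
B = np.array([[-1.0],
              [0.0]])
C = np.array([[σ],
              [0.0]])

def step(x, u, w):
    "One step of x_{t+1} = A x_t + B u_t + C w_{t+1} (illustrative helper)."
    return A @ x + B @ u + C @ w

# Arbitrary test values for assets, control and shock
a, u, w = 3.0, -0.5, 0.8
x_next = step(np.array([a, 1.0]), np.array([u]), np.array([w]))

# The affine budget constraint computed directly
a_next = (1 + r) * a - u - c_bar + σ * w + μ
print(x_next, a_next)
```

The first entry of `x_next` should agree with `a_next`, and the second entry stays equal to one, so the constant term is carried along by the state itself.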

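57.2.2. Preferences

In the LQ model, the aim is to minimize a flow of losses, where time-$t$ loss is given by the quadratic expression

$$
x_t' R x_t + u_t' Q u_t \tag{57.5}
$$

Here

$R$ is assumed to be $n \times n$, symmetric and nonnegative definite.

$Q$ is assumed to be $k \times k$, symmetric and positive definite.

Note

In fact, for many economic problems, the definiteness conditions on $R$ and $Q$ can be relaxed. It is sufficient that certain submatrices of $R$ and $Q$ be nonnegative definite. See [Hansen and Sargent, 2008] for details.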

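57.2.2.1. Example 1

A very simple example that satisfies these assumptions is to take $R$ and $Q$ to be identity matrices so that current loss is

$$
x_t' I x_t + u_t' I u_t = \| x_t \|^2 + \| u_t \|^2
$$

Thus, for both the state and the control, loss is measured as squared distance from the origin.

(In fact, the general case (57.5) can also be understood in this way, but with $R$ and $Q$ identifying other, non-Euclidean notions of “distance” from the zero vector.)

Intuitively, we can often think of the state $x_t$ as representing deviation from a target, such as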

deviation of inflation from some target level

deviation of a firm’s capital stock from some desired quantity

The aim is to put the state close to the target, while using controls parsimoniously.

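57.2.2.2. Example 2

In the household problem studied above, setting $R = 0$ and $Q = 1$ yields preferences

$$
x_t' R x_t + u_t' Q u_t = u_t^2 = (c_t - \bar c)^2
$$

Under this specification, the household’s current loss is the squared deviation of consumption from the ideal level $\bar c$.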
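The following minimal sketch evaluates the quadratic loss $x'Rx + u'Qu$ for the household specification, with $R$ a zero matrix and $Q = 1$, and compares it with the squared deviation of consumption from the ideal level. The helper name `current_loss` and the numerical values are assumptions chosen for illustration only.

```python
import numpy as np

def current_loss(x, u, R, Q):
    "Quadratic loss x'Rx + u'Qu (illustrative helper)."
    return float(x @ R @ x + u @ Q @ u)

c_bar = 2.0                      # assumed "ideal" consumption level
R = np.zeros((2, 2))             # no direct penalty on the state (a_t, 1)
Q = np.array([[1.0]])            # unit penalty on the control

a, c = 3.0, 1.4                  # arbitrary assets and consumption
x = np.array([a, 1.0])
u = np.array([c - c_bar])

loss = current_loss(x, u, R, Q)
print(loss, (c - c_bar)**2)
```

Because $R$ is zero here, the level of assets does not enter current loss at all; only the control matters.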

57.3. Optimality – Finite Horizon

Let’s now be precise about the optimization problem we wish to consider, and look at how to solve it.

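57.3.1. The Objective

We will begin with the finite horizon case, with terminal time $T \in \mathbb{N}$.

In this case, the aim is to choose a sequence of controls $\{u_0, \ldots, u_{T-1}\}$ to minimize the objective

$$
\mathbb{E} \left\{ \sum_{t=0}^{T-1} \beta^t (x_t' R x_t + u_t' Q u_t) + \beta^T x_T' R_f x_T \right\} \tag{57.6}
$$

subject to the law of motion (57.1) and initial state $x_0$.

The new objects introduced here are $\beta$ and the matrix $R_f$.

The scalar $\beta$ is the discount factor, while $x' R_f x$ gives terminal loss associated with state $x$.

Comments:

We assume $R_f$ to be $n \times n$, symmetric and nonnegative definite.

We allow $\beta = 1$, and hence include the undiscounted case.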

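57.3.2. Information

There’s one constraint we’ve neglected to mention so far, which is that the decision-maker who solves this LQ problem knows only the present and the past, not the future.

To clarify this point, consider the sequence of controls $\{u_0, \ldots, u_{T-1}\}$.

When choosing these controls, the decision-maker is permitted to take into account the effects of the shocks $\{w_1, \ldots, w_T\}$ on the system.

However, it is typically assumed (and will be assumed here) that the time-$t$ control $u_t$ can be made with knowledge of past and present shocks only.

The fancy measure-theoretic way of saying this is that $u_t$ must be measurable with respect to the $\sigma$-algebra generated by $x_0, w_1, w_2, \ldots, w_t$.

This is in fact equivalent to stating that $u_t$ can be written in the form $u_t = g_t(x_0, w_1, w_2, \ldots, w_t)$ for some Borel measurable function $g_t$.

(Just about every function that’s useful for applications is Borel measurable, so, for the purposes of intuition, you can read that last phrase as “for some function $g_t$”.)

Now note that $x_t$ will ultimately depend on the realizations of $x_0, w_1, w_2, \ldots, w_t$.

In fact, it turns out that $x_t$ summarizes all the information about these historical shocks that the decision-maker needs to set controls optimally.

More precisely, it can be shown that any optimal control $u_t$ can always be written as a function of the current state alone.

Hence in what follows we restrict attention to control policies (i.e., functions) of the form $u_t = g_t(x_t)$.

Actually, the preceding discussion applies to all standard dynamic programming problems.

What’s special about the LQ case is that, as we shall soon see, the optimal $u_t$ turns out to be a linear function of $x_t$.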

57.3.3. Solution

To solve the finite horizon LQ problem we can use a dynamic programming strategy based on backward induction that is conceptually similar to the approach adopted in this lecture.

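For reasons that will soon become clear, we first introduce the notation $J_T(x) = x' R_f x$.

Now consider the problem of the decision-maker in the second to last period.

In particular, let the time be $T-1$, and suppose that the state is $x_{T-1}$.

The decision-maker must trade off current and (discounted) final losses, and hence solves

$$
\min_u \{ x_{T-1}' R x_{T-1} + u' Q u + \beta \, \mathbb{E} J_T(A x_{T-1} + B u + C w_T) \}
$$

At this stage, it is convenient to define the function

$$
J_{T-1}(x) = \min_u \{ x' R x + u' Q u + \beta \, \mathbb{E} J_T(A x + B u + C w_T) \} \tag{57.7}
$$

The function $J_{T-1}$ will be called the $T-1$ value function, and $J_{T-1}(x)$ can be thought of as representing total “loss-to-go” from state $x$ at time $T-1$ when the decision-maker behaves optimally.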

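Now let’s step back to $T-2$.

For a decision-maker at $T-2$, the value $J_{T-1}(x)$ plays a role analogous to that played by the terminal loss $J_T(x) = x' R_f x$ for the decision-maker at $T-1$.

That is, $J_{T-1}(x)$ summarizes the future loss associated with moving to state $x$.

The decision-maker chooses her control $u$ to trade off current loss against future loss, where

the next period state is $x_{T-1} = A x_{T-2} + B u + C w_{T-1}$, and hence depends on the choice of current control.

the “cost” of landing in state $x_{T-1}$ is $J_{T-1}(x_{T-1})$.

Her problem is therefore

$$
\min_u \{ x_{T-2}' R x_{T-2} + u' Q u + \beta \, \mathbb{E} J_{T-1}(A x_{T-2} + B u + C w_{T-1}) \}
$$

Letting

$$
J_{T-2}(x) = \min_u \{ x' R x + u' Q u + \beta \, \mathbb{E} J_{T-1}(A x + B u + C w_{T-1}) \}
$$

the pattern for backward induction is now clear.

In particular, we define a sequence of value functions $\{J_0, \ldots, J_T\}$ via

$$
J_{t-1}(x) = \min_u \{ x' R x + u' Q u + \beta \, \mathbb{E} J_t(A x + B u + C w_t) \}
\quad \text{and} \quad
J_T(x) = x' R_f x
$$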

The first equality is the Bellman equation from dynamic programming theory specialized to the finite horizon LQ problem.

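Now that we have $\{J_0, \ldots, J_T\}$, we can obtain the optimal controls.

As a first step, let’s find out what the value functions look like.

It turns out that every $J_t$ has the form $J_t(x) = x' P_t x + d_t$ where $P_t$ is an $n \times n$ matrix and $d_t$ is a constant.

We can show this by induction, starting from $P_T := R_f$ and $d_T = 0$.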

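Using this notation, (57.7) becomes

$$
J_{T-1}(x) = \min_u \{ x' R x + u' Q u + \beta \, \mathbb{E} (A x + B u + C w_T)' P_T (A x + B u + C w_T) \} \tag{57.8}
$$

To obtain the minimizer, we can take the derivative of the r.h.s. with respect to $u$ and set it equal to zero.

Applying the relevant rules of matrix calculus, this gives

$$
u = -(Q + \beta B' P_T B)^{-1} \beta B' P_T A x \tag{57.9}
$$

Plugging this back into (57.8) and rearranging yields

$$
J_{T-1}(x) = x' P_{T-1} x + d_{T-1}
$$

where

$$
P_{T-1} = R - \beta^2 A' P_T B (Q + \beta B' P_T B)^{-1} B' P_T A + \beta A' P_T A \tag{57.10}
$$

and

$$
d_{T-1} := \beta \operatorname{trace}(C' P_T C) \tag{57.11}
$$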

(The algebra is a good exercise — we’ll leave it up to you.)

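If we continue working backwards in this manner, it soon becomes clear that $J_t(x) = x' P_t x + d_t$ as claimed, where $\{P_t\}$ and $\{d_t\}$ satisfy the recursions

$$
P_{t-1} = R - \beta^2 A' P_t B (Q + \beta B' P_t B)^{-1} B' P_t A + \beta A' P_t A
\quad \text{with } P_T = R_f \tag{57.12}
$$

and

$$
d_{t-1} = \beta (d_t + \operatorname{trace}(C' P_t C))
\quad \text{with } d_T = 0 \tag{57.13}
$$

Recalling (57.9), the minimizers from these backward steps are

$$
u_t = - F_t x_t
\quad \text{where} \quad
F_t := (Q + \beta B' P_{t+1} B)^{-1} \beta B' P_{t+1} A \tag{57.14}
$$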

These are the linear optimal control policies we discussed above.

In particular, the sequence of controls given by (57.14) and (57.1) solves our finite horizon LQ problem.

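Rephrasing this more precisely, the sequence $u_0, \ldots, u_{T-1}$ given by

$$
u_t = - F_t x_t
\quad \text{with} \quad
x_{t+1} = (A - B F_t) x_t + C w_{t+1} \tag{57.15}
$$

for $t = 0, \ldots, T-1$ attains the minimum of (57.6) subject to our constraints.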
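To make the backward induction concrete, here is a minimal NumPy sketch of the standard finite horizon recursion: starting from $P_T = R_f$ and $d_T = 0$, each step computes the policy matrix for the previous period and then updates $P$ and $d$. The function name `backward_induction` and its return layout are assumptions made for illustration; this is not the code used in QuantEcon.py.

```python
import numpy as np

def backward_induction(A, B, C, Q, R, Rf, β, T):
    """
    Sketch of the finite horizon recursion. Returns lists P[0..T], d[0..T]
    and F[0..T-1], where the time-t policy is u_t = -F[t] x_t.
    """
    P, d, F = [None] * (T + 1), [0.0] * (T + 1), [None] * T
    P[T] = Rf
    for t in range(T, 0, -1):
        S = Q + β * B.T @ P[t] @ B                  # Q + β B'P_t B
        G = β * B.T @ P[t] @ A                      # β B'P_t A
        F[t-1] = np.linalg.solve(S, G)              # policy for period t-1
        P[t-1] = R - G.T @ F[t-1] + β * A.T @ P[t] @ A
        d[t-1] = β * (d[t] + np.trace(C.T @ P[t] @ C))
    return P, d, F

# A scalar one-period example that is easy to check by hand
one = np.array([[1.0]])
P, d, F = backward_induction(A=one, B=one, C=0 * one, Q=one, R=one,
                             Rf=one, β=1.0, T=1)
print(P[0], F[0])   # by hand: P_0 = 1 - 1/2 + 1 = 1.5 and F_0 = 1/2
```

Using `np.linalg.solve` rather than forming an explicit inverse is a design choice for numerical stability; the result is the same linear policy.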

57.4. Implementation

We will use code from lqcontrol.py in QuantEcon.py to solve finite and infinite horizon linear quadratic control problems.

In the module, the various updating, simulation and fixed point methods

are wrapped in a class called LQ, which includes

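Instance data:

The required parameters $Q, R, A, B$ and optional parameters $C, \beta, T, R_f, N$ specifying a given LQ model

set T and Rf to None in the infinite horizon case

set C = None (or zero) in the deterministic case

the value function and policy data

$d_t, P_t, F_t$ in the finite horizon case

$d, P, F$ in the infinite horizon case

Methods:

update_values, which shifts $d_t, P_t, F_t$ to their $t-1$ values via (57.12), (57.13) and (57.14)

stationary_values, which computes $P, d, F$ in the infinite horizon case

compute_sequence, which simulates the dynamics of $x_t, u_t, w_t$ given $x_0$ and assuming standard normal shocks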

57.4.1. An Application

Early Keynesian models assumed that households have a constant marginal propensity to consume from current income.

Data contradicted the constancy of the marginal propensity to consume.

In response, Milton Friedman, Franco Modigliani and others built models based on a consumer’s preference for an intertemporally smooth consumption stream.

(See, for example, [Friedman, 1956] or [Modigliani and Brumberg, 1954].)

One property of those models is that households purchase and sell financial assets to make consumption streams smoother than income streams.

The household savings problem outlined above captures these ideas.

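The optimization problem for the household is to choose a consumption sequence in order to minimize

$$
\mathbb{E} \left\{ \sum_{t=0}^{T-1} \beta^t (c_t - \bar c)^2 + \beta^T q a_T^2 \right\} \tag{57.16}
$$

subject to the sequence of budget constraints $a_{t+1} = (1 + r) a_t - c_t + y_t, \ t \geq 0$.

Here $q$ is a large positive constant, the role of which is to induce the consumer to target zero debt at the end of her life.

(Without such a constraint, the optimal choice is to choose $c_t = \bar c$ in each period, letting assets adjust accordingly.)

As before we set $y_t = \sigma w_{t+1} + \mu$ and $u_t := c_t - \bar c$, after which the constraint can be written as in (57.2).

We saw how this constraint could be manipulated into the LQ formulation $x_{t+1} = A x_t + B u_t + C w_{t+1}$ by setting $x_t = (a_t \; 1)'$ and using the definitions in (57.4).

To match with this state and control, the objective function (57.16) can be written in the form of (57.6) by choosing

$$
Q := 1, \quad
R := \begin{pmatrix} 0 & 0 \\ 0 & 0 \end{pmatrix}, \quad
R_f := \begin{pmatrix} q & 0 \\ 0 & 0 \end{pmatrix}
$$

Now that the problem is expressed in LQ form, we can proceed to the solution by applying (57.12) and (57.14).

After generating shocks $w_1, \ldots, w_T$, the dynamics for assets and consumption can be simulated via (57.15).

The following figure was computed using $r = 0.05$, $\beta = 1 / (1 + r)$, $\bar c = 2$, $\mu = 1$, $\sigma = 0.25$, $T = 45$ and $q = 10^6$.

The shocks $\{w_t\}$ were taken to be IID and standard normal.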

# Model parameters

r = 0.05

β = 1/(1 + r)

T = 45

c_bar = 2

σ = 0.25

μ = 1

q = 1e6

# Formulate as an LQ problem

Q = 1

R = np.zeros((2, 2))

Rf = np.zeros((2, 2))

Rf[0, 0] = q

A = [[1 + r, -c_bar + μ],

[0, 1]]

B = [[-1],

[ 0]]

C = [[σ],

[0]]

# Compute solutions and simulate

lq = LQ(Q, R, A, B, C, beta=β, T=T, Rf=Rf)

x0 = (0, 1)

xp, up, wp = lq.compute_sequence(x0)

# Convert back to assets, consumption and income

assets = xp[0, :] # a_t

c = up.flatten() + c_bar # c_t

income = σ * wp[0, 1:] + μ # y_t

# Plot results

n_rows = 2

fig, axes = plt.subplots(n_rows, 1, figsize=(12, 10))

plt.subplots_adjust(hspace=0.5)

bbox = (0., 1.02, 1., .102)

legend_args = {'bbox_to_anchor': bbox, 'loc': 3, 'mode': 'expand'}

p_args = {'lw': 2, 'alpha': 0.7}

axes[0].plot(list(range(1, T+1)), income, 'g-', label="non-financial income",

**p_args)

axes[0].plot(list(range(T)), c, 'k-', label="consumption", **p_args)

axes[1].plot(list(range(1, T+1)), np.cumsum(income - μ), 'r-',

label="cumulative unanticipated income", **p_args)

axes[1].plot(list(range(T+1)), assets, 'b-', label="assets", **p_args)

axes[1].plot(list(range(T)), np.zeros(T), 'k-')

for ax in axes:
    ax.grid()
    ax.set_xlabel('Time')
    ax.legend(ncol=2, **legend_args)

plt.show()

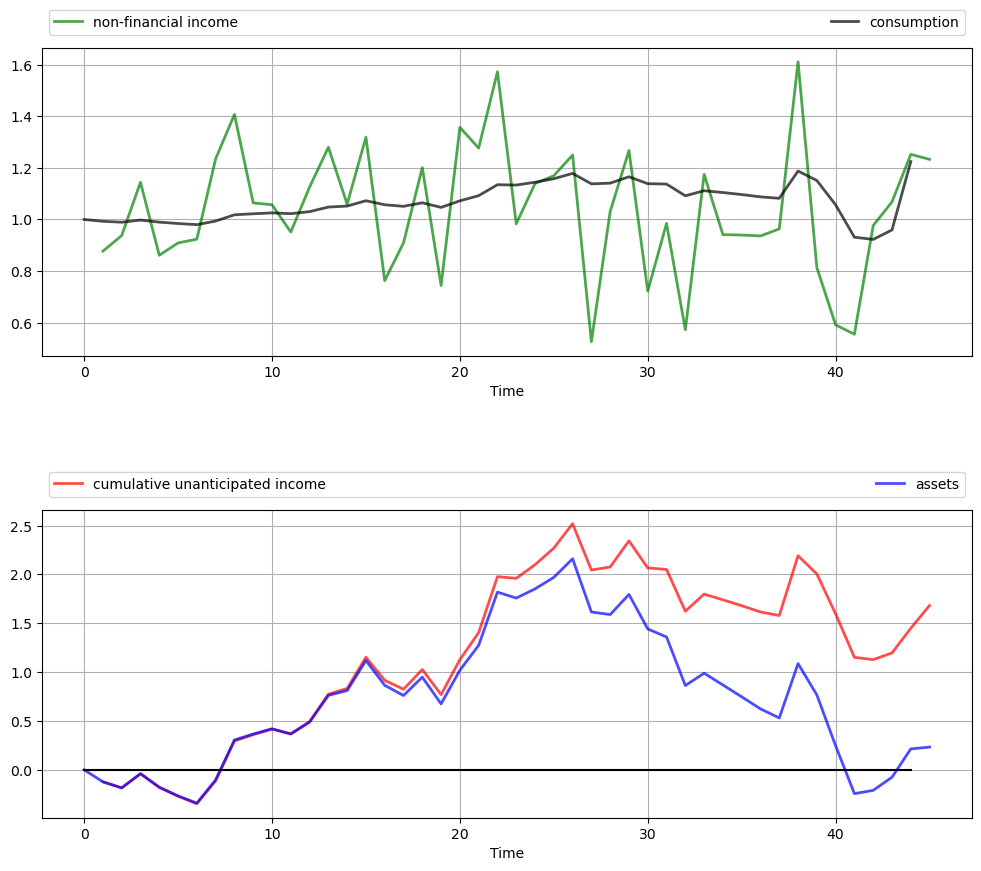

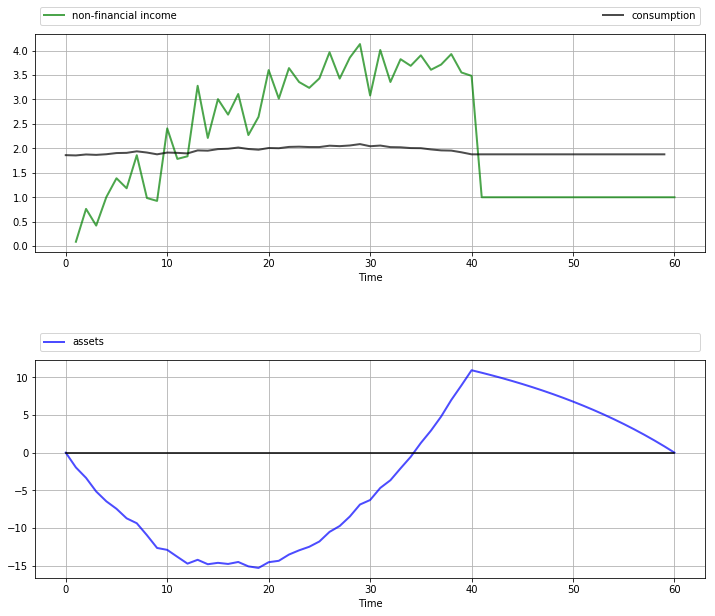

The top panel shows the time path of consumption $c_t$ and income $y_t$ in the simulation.

As anticipated by the discussion on consumption smoothing, the time path of consumption is much smoother than that for income.

(But note that consumption becomes more irregular towards the end of life, when the zero final asset requirement impinges more on consumption choices.)

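The second panel in the figure shows that the time path of assets $a_t$ is closely correlated with cumulative unanticipated income, where the latter is defined as $z_t := \sum_{j=1}^{t} \sigma w_j$.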

A key message is that unanticipated windfall gains are saved rather than consumed, while unanticipated negative shocks are met by reducing assets.

(Again, this relationship breaks down towards the end of life due to the zero final asset requirement.)

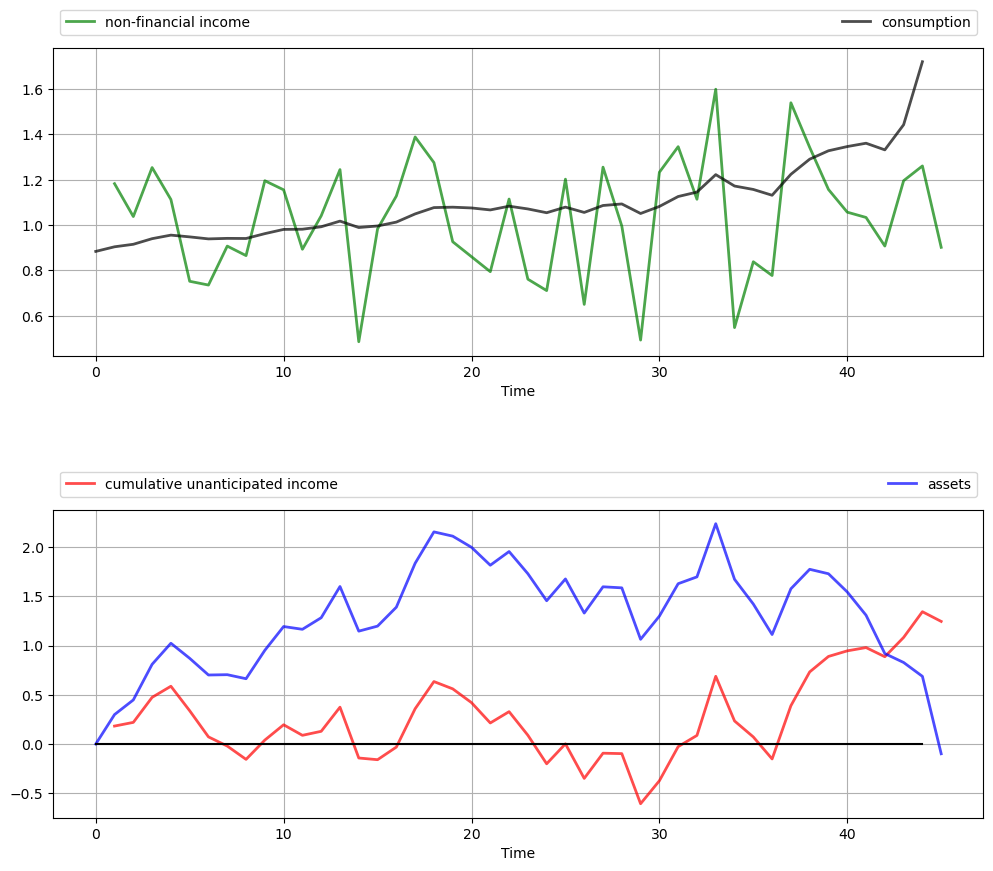

These results are relatively robust to changes in parameters.

For example, let’s increase $\beta$ from $1 / (1 + r) \approx 0.952$ to $0.96$ while keeping other parameters fixed.

This consumer is slightly more patient than the last one, and hence puts relatively more weight on later consumption values.

# Compute solutions and simulate

lq = LQ(Q, R, A, B, C, beta=0.96, T=T, Rf=Rf)

x0 = (0, 1)

xp, up, wp = lq.compute_sequence(x0)

# Convert back to assets, consumption and income

assets = xp[0, :] # a_t

c = up.flatten() + c_bar # c_t

income = σ * wp[0, 1:] + μ # y_t

# Plot results

n_rows = 2

fig, axes = plt.subplots(n_rows, 1, figsize=(12, 10))

plt.subplots_adjust(hspace=0.5)

bbox = (0., 1.02, 1., .102)

legend_args = {'bbox_to_anchor': bbox, 'loc': 3, 'mode': 'expand'}

p_args = {'lw': 2, 'alpha': 0.7}

axes[0].plot(list(range(1, T+1)), income, 'g-', label="non-financial income",

**p_args)

axes[0].plot(list(range(T)), c, 'k-', label="consumption", **p_args)

axes[1].plot(list(range(1, T+1)), np.cumsum(income - μ), 'r-',

label="cumulative unanticipated income", **p_args)

axes[1].plot(list(range(T+1)), assets, 'b-', label="assets", **p_args)

axes[1].plot(list(range(T)), np.zeros(T), 'k-')

for ax in axes:
    ax.grid()
    ax.set_xlabel('Time')
    ax.legend(ncol=2, **legend_args)

plt.show()

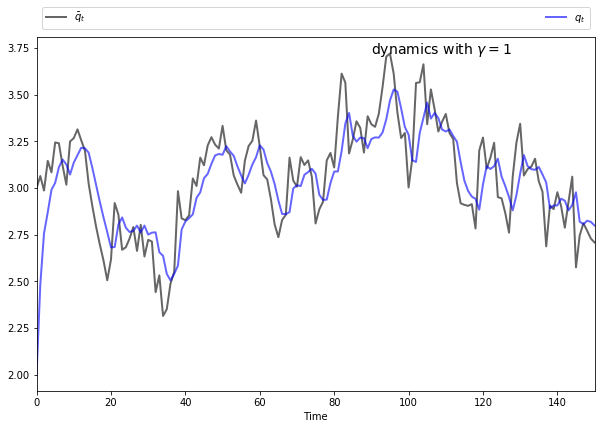

We now have a slowly rising consumption stream and a hump-shaped build-up of assets in the middle periods to fund rising consumption.

However, the essential features are the same: consumption is smooth relative to income, and assets are strongly positively correlated with cumulative unanticipated income.

57.5. Extensions and Comments

Let’s now consider a number of standard extensions to the LQ problem treated above.

57.5.1. Time-Varying Parameters

In some settings, it can be desirable to allow $A, B, C, R$ and $Q$ to depend on $t$.

For the sake of simplicity, we’ve chosen not to treat this extension in our implementation given below.

However, the loss of generality is not as large as you might first imagine.

In fact, we can tackle many models with time-varying parameters by suitable choice of state variables.

One illustration is given below.

For further examples and a more systematic treatment, see [Hansen and Sargent, 2013], section 2.4.

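57.5.2. Adding a Cross-Product Term

In some LQ problems, preferences include a cross-product term $u_t' N x_t$, so that the objective function becomes

$$
\mathbb{E} \left\{ \sum_{t=0}^{T-1} \beta^t (x_t' R x_t + u_t' Q u_t + 2 u_t' N x_t) + \beta^T x_T' R_f x_T \right\} \tag{57.17}
$$

Our results extend to this case in a straightforward way.

The sequence $\{P_t\}$ from (57.12) becomes

$$
P_{t-1} = R - (\beta B' P_t A + N)' (Q + \beta B' P_t B)^{-1} (\beta B' P_t A + N) + \beta A' P_t A
\quad \text{with } P_T = R_f \tag{57.18}
$$

The policies in (57.14) are modified to

$$
u_t = - F_t x_t
\quad \text{where} \quad
F_t := (Q + \beta B' P_{t+1} B)^{-1} (\beta B' P_{t+1} A + N) \tag{57.19}
$$

The sequence $\{d_t\}$ is unchanged from (57.13).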

We leave interested readers to confirm these results (the calculations are long but not overly difficult).
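For readers who want to experiment before doing the algebra, the sketch below implements one backward step of the recursion with a cross-product matrix $N$ of shape $k \times n$, using the standard modification in which $\beta B' P A$ is replaced by $\beta B' P A + N$. The function name `riccati_step` is an assumption made for illustration. The scalar example can be checked by minimizing $1 + u^2 + u + (1 + u)^2$ over $u$ directly.

```python
import numpy as np

def riccati_step(P, A, B, Q, R, β, N=None):
    "One backward step P_t -> (P_{t-1}, F_{t-1}), allowing a cross-product term N."
    n, k = A.shape[0], B.shape[1]
    N = np.zeros((k, n)) if N is None else N
    S = Q + β * B.T @ P @ B                  # Q + β B'PB
    G = β * B.T @ P @ A + N                  # β B'PA + N
    F = np.linalg.solve(S, G)                # policy matrix, u = -F x
    P_new = R - G.T @ F + β * A.T @ P @ A
    return P_new, F

# Scalar check: A = B = Q = R = P = 1, β = 1, N = 1/2
one = np.array([[1.0]])
P_new, F = riccati_step(one, one, one, one, one, 1.0, N=0.5 * one)
print(P_new, F)     # by hand: F = 1.5 / 2 = 0.75 and P_new = 1 - 1.5 * 0.75 + 1 = 0.875
```

Setting `N=None` recovers the step without a cross-product term.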

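57.5.3. Infinite Horizon

Finally, we consider the infinite horizon case, with cross-product term, unchanged dynamics and objective function given by

$$
\mathbb{E} \left\{ \sum_{t=0}^{\infty} \beta^t (x_t' R x_t + u_t' Q u_t + 2 u_t' N x_t) \right\} \tag{57.20}
$$

In the infinite horizon case, optimal policies can depend on time only if time itself is a component of the state vector $x_t$.

In other words, there exists a fixed matrix $F$ such that $u_t = - F x_t$ for all $t$.

That decision rules are constant over time is intuitive: after all, the decision-maker faces the same infinite horizon at every stage, with only the current state changing.

Not surprisingly, $P$ and $d$ are also constant.

The stationary matrix $P$ is the solution to the discrete-time algebraic Riccati equation

$$
P = R - (\beta B' P A + N)' (Q + \beta B' P B)^{-1} (\beta B' P A + N) + \beta A' P A \tag{57.21}
$$

Equation (57.21) is also called the LQ Bellman equation, and the map that sends a given $P$ into the right-hand side of (57.21) is called the LQ Bellman operator.

The stationary optimal policy for this model is

$$
u = - F x
\quad \text{where} \quad
F = (Q + \beta B' P B)^{-1} (\beta B' P A + N) \tag{57.22}
$$

The sequence $\{d_t\}$ from (57.13) is replaced by the constant value

$$
d := \operatorname{trace}(C' P C) \frac{\beta}{1 - \beta} \tag{57.23}
$$

The state evolves according to the time-homogeneous process $x_{t+1} = (A - BF) x_t + C w_{t+1}$.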

An example infinite horizon problem is treated below.
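One simple way to approximate the stationary solution is to iterate the LQ Bellman operator until successive matrices stop changing. The sketch below does this with plain NumPy; the function name `stationary_solution`, the zero starting matrix and the tolerance are assumptions for illustration, and the library's own routine may use a different and faster algorithm. The scalar example with $A = B = Q = R = 1$ has a closed form obtained from the quadratic $\beta P^2 + (1 - 2\beta) P - 1 = 0$, which gives a check on the iteration.

```python
import numpy as np

def stationary_solution(A, B, C, Q, R, β, N=None, tol=1e-12, max_iter=10_000):
    "Iterate the LQ Bellman operator to an approximate fixed point; return (P, F, d)."
    n, k = A.shape[0], B.shape[1]
    N = np.zeros((k, n)) if N is None else N
    P = np.zeros((n, n))                       # assumed starting point
    for _ in range(max_iter):
        G = β * B.T @ P @ A + N
        P_new = R - G.T @ np.linalg.solve(Q + β * B.T @ P @ B, G) + β * A.T @ P @ A
        converged = np.max(np.abs(P_new - P)) < tol
        P = P_new
        if converged:
            break
    F = np.linalg.solve(Q + β * B.T @ P @ B, β * B.T @ P @ A + N)
    d = β / (1 - β) * np.trace(C.T @ P @ C)
    return P, F, d

# Scalar example with a known closed form for P
β = 0.95
one = np.array([[1.0]])
P, F, d = stationary_solution(one, one, 0.5 * one, one, one, β)
P_exact = (-(1 - 2 * β) + np.sqrt((1 - 2 * β)**2 + 4 * β)) / (2 * β)
print(P[0, 0], P_exact, F[0, 0], d)
```

Plain iteration converges here because the example is well behaved; for larger or poorly conditioned problems a dedicated Riccati solver is usually preferable.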

57.5.4. Certainty Equivalence

Linear quadratic control problems of the class discussed above have the property of certainty equivalence.

By this, we mean that the optimal policy $F$ is not a function of the parameters in $C$, which specify the shock process.

This can be confirmed by inspecting (57.22) or (57.19).

It follows that we can ignore uncertainty when solving for optimal behavior, and plug it back in when examining optimal state dynamics.
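The sketch below illustrates certainty equivalence numerically: a stationary problem is solved twice, once without shocks and once with a nonzero volatility matrix, and the resulting policy matrices are compared. The solver `solve_stationary`, the fixed iteration count and all matrices are assumptions chosen for illustration (with no cross-product term). Note that in the code the volatility matrix enters only the constant $d$, never the updates of $P$ or $F$.

```python
import numpy as np

def solve_stationary(A, B, C, Q, R, β, n_iter=2_000):
    "Fixed number of Riccati iterations; returns (P, F, d). Illustrative only."
    P = np.zeros_like(A)
    for _ in range(n_iter):
        G = β * B.T @ P @ A
        P = R - G.T @ np.linalg.solve(Q + β * B.T @ P @ B, G) + β * A.T @ P @ A
    F = np.linalg.solve(Q + β * B.T @ P @ B, β * B.T @ P @ A)
    d = β / (1 - β) * np.trace(C.T @ P @ C)   # the only place C is used
    return P, F, d

# An arbitrary two-state, one-control example
A = np.array([[1.0, 0.1],
              [0.0, 0.9]])
B = np.array([[0.0],
              [1.0]])
Q, R, β = np.array([[1.0]]), np.eye(2), 0.95

C_quiet = np.zeros((2, 1))                    # deterministic case
C_noisy = np.array([[0.5],
                    [1.0]])                   # stochastic case

_, F_quiet, d_quiet = solve_stationary(A, B, C_quiet, Q, R, β)
_, F_noisy, d_noisy = solve_stationary(A, B, C_noisy, Q, R, β)
print(F_quiet, F_noisy, d_quiet, d_noisy)
```

The two policy matrices coincide, while the constant term of the value function rises with the amount of noise.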

57.6. Further Applications

57.6.1. Application 1: Age-Dependent Income Process

Previously we studied a permanent income model that generated consumption smoothing.

One unrealistic feature of that model is the assumption that the mean of the random income process does not depend on the consumer’s age.

A more realistic income profile is one that rises in early working life, peaks towards the middle and maybe declines toward the end of working life and falls more during retirement.

In this section, we will model this rise and fall as a symmetric inverted “U” using a polynomial in age.

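As before, the consumer seeks to minimize

$$
\mathbb{E} \left\{ \sum_{t=0}^{T-1} \beta^t (c_t - \bar c)^2 + \beta^T q a_T^2 \right\} \tag{57.24}
$$

subject to $a_{t+1} = (1 + r) a_t - c_t + y_t, \ t \geq 0$.

For income we now take $y_t = p(t) + \sigma w_{t+1}$ where $p(t) := m_0 + m_1 t + m_2 t^2$.

(In the next section we employ some tricks to implement a more sophisticated model.)

The coefficients $m_0, m_1, m_2$ are chosen such that $p(0) = 0$, $p(T/2) = \mu$, and $p(T) = 0$.

You can confirm that the specification $m_0 = 0$, $m_1 = T \mu / (T/2)^2$, $m_2 = -\mu / (T/2)^2$ satisfies these constraints.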
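The check can also be done numerically. The sketch below uses the coefficient formulas that appear in the solution code for Exercise 57.1, namely `m1 = T * (μ/(T/2)**2)` and `m2 = -(μ/(T/2)**2)` with a zero constant term, and evaluates the quadratic at ages $0$, $T/2$ and $T$. The function name `p` mirrors the notation of the text.

```python
# Values taken from the solution code for Exercise 57.1
T, μ = 50, 2

m0 = 0.0
m1 = T * (μ / (T / 2)**2)
m2 = -(μ / (T / 2)**2)

def p(t):
    "Deterministic part of income, a quadratic in age t."
    return m0 + m1 * t + m2 * t**2

# Expect zero at both ends of life and μ at the midpoint, up to rounding
print(p(0), p(T / 2), p(T))
```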

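To put this into an LQ setting, consider the budget constraint, which becomes

$$
a_{t+1} = (1 + r) a_t - u_t - \bar c + m_1 t + m_2 t^2 + \sigma w_{t+1} \tag{57.25}
$$

The fact that $a_{t+1}$ is a linear function of $(a_t, 1, t, t^2)$ suggests taking these four variables as the state vector $x_t$.

Once a good choice of state and control (recall $u_t = c_t - \bar c$) has been made, the remaining specifications fall into place relatively easily.

Thus, for the dynamics we set

$$
x_t := \begin{pmatrix} a_t \\ 1 \\ t \\ t^2 \end{pmatrix}, \quad
A := \begin{pmatrix} 1 + r & -\bar c & m_1 & m_2 \\ 0 & 1 & 0 & 0 \\ 0 & 1 & 1 & 0 \\ 0 & 1 & 2 & 1 \end{pmatrix}, \quad
B := \begin{pmatrix} -1 \\ 0 \\ 0 \\ 0 \end{pmatrix}, \quad
C := \begin{pmatrix} \sigma \\ 0 \\ 0 \\ 0 \end{pmatrix}
\tag{57.26}
$$

If you expand the expression $x_{t+1} = A x_t + B u_t + C w_{t+1}$ using this specification, you will find that assets follow (57.25) as desired and that the other state variables also update appropriately.

To implement preference specification (57.24) we take

$$
Q := 1, \quad
R := \begin{pmatrix} 0 & 0 & 0 & 0 \\ 0 & 0 & 0 & 0 \\ 0 & 0 & 0 & 0 \\ 0 & 0 & 0 & 0 \end{pmatrix}
\quad \text{and} \quad
R_f := \begin{pmatrix} q & 0 & 0 & 0 \\ 0 & 0 & 0 & 0 \\ 0 & 0 & 0 & 0 \\ 0 & 0 & 0 & 0 \end{pmatrix}
\tag{57.27}
$$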
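As a sanity check on the four-dimensional state $(a_t, 1, t, t^2)$, the sketch below takes the matrices from the solution code for Exercise 57.1 and applies one step of the law of motion. It then compares the first entry with the budget constraint computed directly and confirms that the remaining entries advance to $(1, t+1, (t+1)^2)$. The test values for assets, control, shock and age are arbitrary assumptions.

```python
import numpy as np

# Parameters and matrices as in the solution code for Exercise 57.1
r, c_bar, σ = 0.05, 1.5, 0.15
T, μ = 50, 2
m1, m2 = T * (μ / (T / 2)**2), -(μ / (T / 2)**2)

A = np.array([[1 + r, -c_bar, m1, m2],
              [0,      1,     0,  0],
              [0,      1,     1,  0],
              [0,      1,     2,  1]])
B = np.array([-1.0, 0, 0, 0])
C = np.array([σ, 0, 0, 0])

t, a, u, w = 7, 1.2, -0.3, 0.4               # arbitrary test values
x = np.array([a, 1, t, t**2])
x_next = A @ x + B * u + C * w               # scalar control and shock

# Budget constraint with polynomial income, computed directly
a_next = (1 + r) * a - u - c_bar + m1 * t + m2 * t**2 + σ * w
print(x_next, a_next)
```

The last row works because $(t+1)^2 = 1 + 2t + t^2$, which is linear in the current state.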

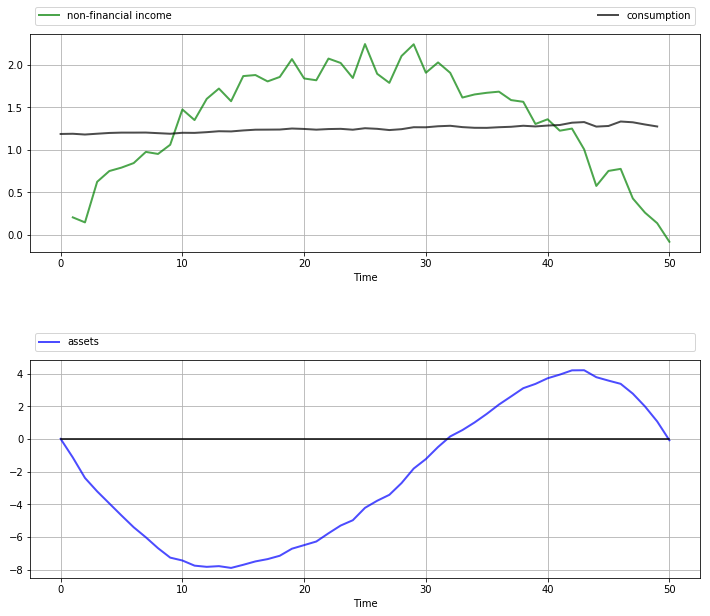

The next figure shows a simulation of consumption and assets computed using

the compute_sequence method of lqcontrol.py with initial assets set to zero.

Once again, smooth consumption is a dominant feature of the sample paths.

The asset path exhibits dynamics consistent with standard life cycle theory.

Exercise 57.1 gives the full set of parameters used here and asks you to replicate the figure.

57.6.2. Application 2: A Permanent Income Model with Retirement

In the previous application, we generated income dynamics with an inverted U shape using polynomials and placed them in an LQ framework.

It is arguably the case that this income process still contains unrealistic features.

A more common earning profile is where

income grows over working life, fluctuating around an increasing trend, with growth flattening off in later years

retirement follows, with lower but relatively stable (non-financial) income

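Letting $K$ be the retirement date, we can express these income dynamics by

$$
y_t =
\begin{cases}
p(t) + \sigma w_{t+1} & \text{if } t \leq K \\
s & \text{otherwise}
\end{cases}
\tag{57.28}
$$

Here

$p(t) := m_1 t + m_2 t^2$ with the coefficients $m_1, m_2$ chosen such that $p(K) = \mu$ and $p(0) = p(2K) = 0$

$s$ is retirement income

We suppose that preferences are unchanged and given by (57.16).

The budget constraint is also unchanged and given by $a_{t+1} = (1 + r) a_t - c_t + y_t$.

Our aim is to solve this problem and simulate paths using the LQ techniques described in this lecture.

In fact, this is a nontrivial problem, as the kink in the dynamics (57.28) at $K$ makes it very difficult to express the law of motion as a fixed-coefficient linear system.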

However, we can still use our LQ methods here by suitably linking two component LQ problems.

These two LQ problems describe the consumer’s behavior during her working life (lq_working) and retirement (lq_retired).

(This is possible because, in the two separate periods of life, the respective income processes [polynomial trend and constant] each fit the LQ framework.)

The basic idea is that although the whole problem is not a single time-invariant LQ problem, it is still a dynamic programming problem, and hence we can use appropriate Bellman equations at every stage.

Based on this logic, we can

solve lq_retired by the usual backward induction procedure, iterating back to the start of retirement.

take the start-of-retirement value function generated by this process, and use it as the terminal condition $R_f$ to feed into the lq_working specification.

solve lq_working by backward induction from this choice of $R_f$, iterating back to the start of working life.

This process gives the entire life-time sequence of value functions and optimal policies.
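The linking procedure can be sketched with plain NumPy, independently of the LQ class. The helper `backward_policies` is an assumption made for illustration: it runs a given number of backward induction steps from a terminal matrix and returns the starting matrix together with the policies in forward time order. Parameters and matrices follow the solution code for Exercise 57.2. The simulation at the end switches off the shocks, which is enough to check that the two stages fit together.

```python
import numpy as np

def backward_policies(A, B, Q, R, Rf, β, n_steps):
    "Run n_steps of backward induction from terminal matrix Rf; return (P_start, [F_0, ...])."
    P, F_list = Rf, []
    for _ in range(n_steps):
        G = β * B.T @ P @ A
        F = np.linalg.solve(Q + β * B.T @ P @ B, G)
        P = R - G.T @ F + β * A.T @ P @ A
        F_list.append(F)
    return P, F_list[::-1]                   # reorder so policies run forward in time

# Parameters from the solution code for Exercise 57.2
r, T, K, c_bar, μ, q, s = 0.05, 60, 40, 4, 4, 1e4, 1
β = 1 / (1 + r)
m1, m2 = 2 * μ / K, -μ / K**2

Q, R = np.array([[1.0]]), np.zeros((4, 4))
Rf = np.zeros((4, 4))
Rf[0, 0] = q
B = np.array([[-1.0], [0], [0], [0]])
clock = [[0, 1, 0, 0], [0, 1, 1, 0], [0, 1, 2, 1]]     # rows updating (1, t, t^2)
A_retired = np.array([[1 + r, s - c_bar, 0, 0]] + clock)
A_working = np.array([[1 + r, -c_bar, m1, m2]] + clock)

# Step 1: retirement problem, solved back to the retirement date
P_K, F_retired = backward_policies(A_retired, B, Q, R, Rf, β, T - K)
# Steps 2 and 3: working-life problem with P_K as its terminal condition
P_0, F_working = backward_policies(A_working, B, Q, R, P_K, β, K)

# Shock-free simulation of the whole life under u_t = -F_t x_t
policies = F_working + F_retired
x = np.array([0.0, 1, 0, 0])
for t, F in enumerate(policies):
    A = A_working if t < K else A_retired
    x = (A - B @ F) @ x
print(len(policies), x[0])                   # number of policies and terminal assets
```

With a large terminal penalty, terminal assets in the shock-free path end up very close to zero, which is the behavior the penalty is designed to induce.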

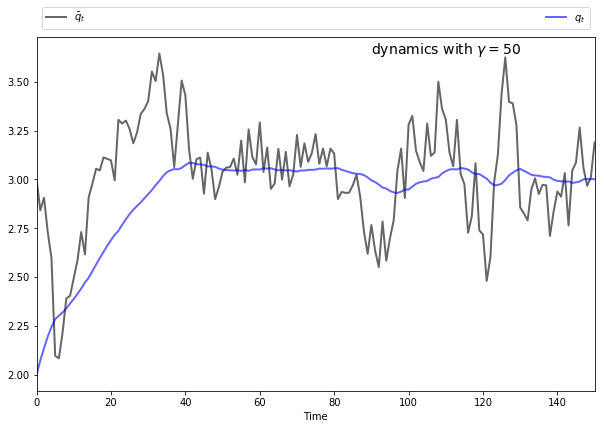

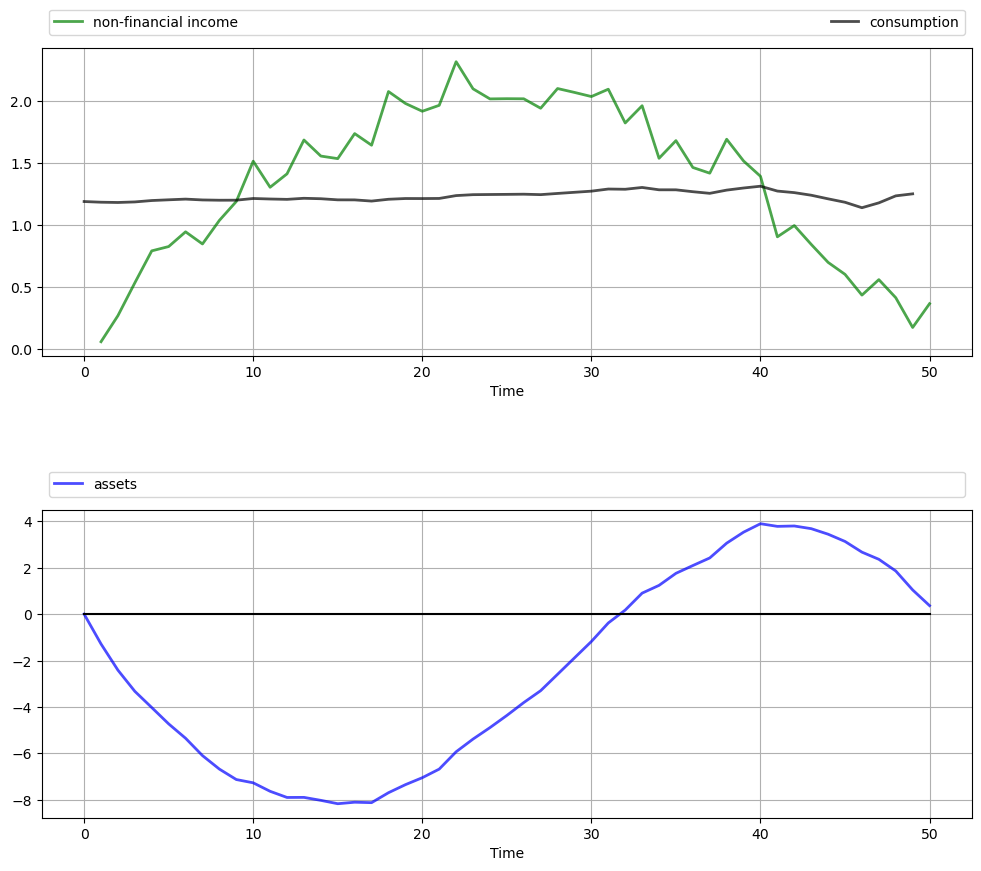

The next figure shows one simulation based on this procedure.

The full set of parameters used in the simulation is discussed in Exercise 57.2, where you are asked to replicate the figure.

Once again, the dominant feature observable in the simulation is consumption smoothing.

The asset path fits well with standard life cycle theory, with dissaving early in life followed by later saving.

Assets peak at retirement and subsequently decline.

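57.6.3. Application 3: Monopoly with Adjustment Costs

Consider a monopolist facing stochastic inverse demand function

$$
p_t = a_0 - a_1 q_t + d_t
$$

Here $q_t$ is output, and the demand shock $d_t$ follows

$$
d_{t+1} = \rho d_t + \sigma w_{t+1}
$$

where $\{w_t\}$ is IID and standard normal.

The monopolist maximizes the expected discounted sum of present and future profits

$$
\mathbb{E} \left\{ \sum_{t=0}^{\infty} \beta^t \pi_t \right\}
\quad \text{where} \quad
\pi_t := p_t q_t - c q_t - \gamma (q_{t+1} - q_t)^2 \tag{57.29}
$$

Here

$\gamma (q_{t+1} - q_t)^2$ represents adjustment costs

$c$ is average cost of production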

This can be formulated as an LQ problem and then solved and simulated, but first let’s study the problem and try to get some intuition.

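One way to start thinking about the problem is to consider what would happen if $\gamma = 0$.

Without adjustment costs there is no intertemporal trade-off, so the monopolist will choose output to maximize current profit in each period.

It’s not difficult to show that profit-maximizing output is

$$
\bar q_t := \frac{a_0 - c + d_t}{2 a_1}
$$

In light of this discussion, what we might expect for general $\gamma$ is that

if $\gamma$ is close to zero, then $q_t$ will track the time path of $\bar q_t$ relatively closely.

if $\gamma$ is larger, then $q_t$ will be smoother than $\bar q_t$, as the monopolist seeks to avoid adjustment costs.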

This intuition turns out to be correct.
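The static benchmark is easy to verify numerically. With inverse demand $p = a_0 - a_1 q + d$ and unit cost $c$, current profit without adjustment costs is a concave quadratic in output, maximized at $(a_0 - c + d) / (2 a_1)$. The sketch below uses the parameter values from Exercise 57.3; the demand shock value and the function name `static_profit` are assumptions for illustration.

```python
# Parameter values from Exercise 57.3; the demand shock value d is arbitrary
a0, a1, c = 5, 0.5, 2
d = 0.3

def static_profit(q):
    "Current profit when there are no adjustment costs (γ = 0)."
    price = a0 - a1 * q + d
    return price * q - c * q

q_bar = (a0 - c + d) / (2 * a1)

# Profit at q_bar should beat profit at nearby output levels
print(q_bar, static_profit(q_bar),
      static_profit(q_bar - 0.1), static_profit(q_bar + 0.1))
```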

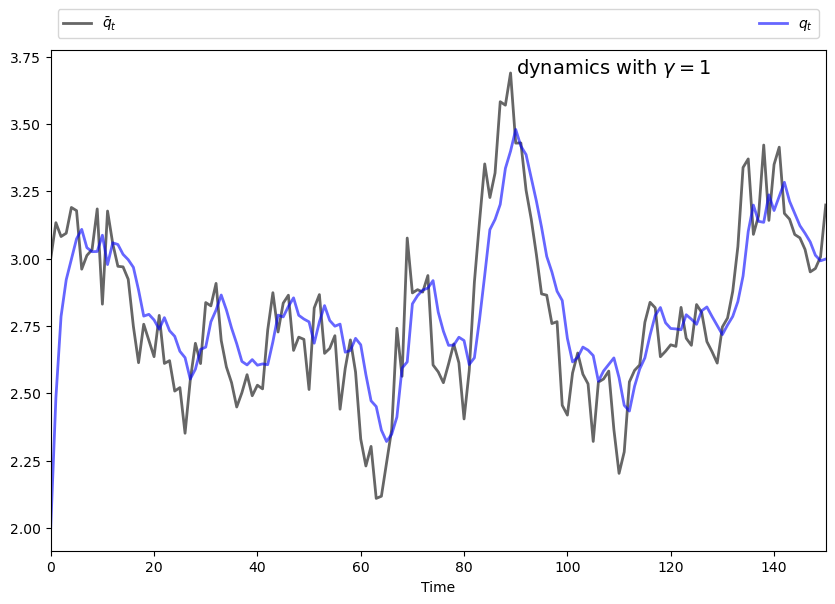

The following figures show simulations produced by solving the corresponding LQ problem.

The only difference in parameters across the figures is the size of $\gamma$.

To produce these figures we converted the monopolist problem into an LQ problem.

The key to this conversion is to choose the right state — which can be a bit of an art.

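Here we take $x_t = (\bar q_t \;\, q_t \;\, 1)'$, while the control is chosen as $u_t = q_{t+1} - q_t$.

We also manipulated the profit function slightly.

In (57.29), current profits are $\pi_t := p_t q_t - c q_t - \gamma (q_{t+1} - q_t)^2$.

Let’s now replace $\pi_t$ in (57.29) with $\hat \pi_t := \pi_t - a_1 \bar q_t^2$.

This makes no difference to the solution, since $a_1 \bar q_t^2$ does not depend on the controls.

(In fact, we are just adding a constant term to (57.29), and optimizers are not affected by constant terms.)

The reason for making this substitution is that, as you will be able to verify, $\hat \pi_t$ reduces to the simple quadratic

$$
\hat \pi_t = -a_1 (q_t - \bar q_t)^2 - \gamma u_t^2
$$

After negation to convert to a minimization problem, the objective becomes

$$
\min \mathbb{E} \sum_{t=0}^{\infty} \beta^t \left\{ a_1 (q_t - \bar q_t)^2 + \gamma u_t^2 \right\} \tag{57.30}
$$

It’s now relatively straightforward to find $R$ and $Q$ such that (57.30) can be written as (57.20).

Furthermore, the matrices $A$, $B$ and $C$ from (57.1) can be found by writing down the dynamics of each element of the state.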

Exercise 57.3 asks you to complete this process, and reproduce the preceding figures.
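Before building the matrices, it can help to confirm the key algebraic step numerically: subtracting $a_1 \bar q_t^2$ from current profit, where $\bar q_t = (a_0 - c + d_t)/(2 a_1)$, leaves $-a_1 (q_t - \bar q_t)^2 - \gamma u_t^2$ with $u_t = q_{t+1} - q_t$. The sketch below checks this at one arbitrary point, using parameter values from the solution code for Exercise 57.3; the shock and output values are assumptions for illustration.

```python
a0, a1, c, γ = 5, 0.5, 2, 1       # from the solution code for Exercise 57.3
d, q, q_next = 0.3, 2.5, 2.8      # arbitrary shock and output levels

q_bar = (a0 - c + d) / (2 * a1)
u = q_next - q

profit = (a0 - a1 * q + d) * q - c * q - γ * u**2      # current profit π_t
profit_hat = profit - a1 * q_bar**2                     # shifted by a term free of the control
quadratic = -a1 * (q - q_bar)**2 - γ * u**2

# The two numbers should agree up to rounding error
print(profit_hat, quadratic)
```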

57.7. Exercises

Exercise 57.1

Replicate the figure with polynomial income shown above.

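The parameters are $r = 0.05$, $\beta = 1 / (1 + r)$, $\bar c = 1.5$, $\mu = 2$, $\sigma = 0.15$, $T = 50$ and $q = 10^4$.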

Solution to Exercise 57.1

Here’s one solution.

We use some fancy plot commands to get a certain style — feel free to use simpler ones.

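The model is an LQ permanent income / life-cycle model with hump-shaped income

$$
y_t = m_1 t + m_2 t^2 + \sigma w_{t+1}
$$

where $\{w_t\}$ is IID $N(0, 1)$ and the coefficients $m_1$ and $m_2$ are chosen so that $p(t) = m_1 t + m_2 t^2$ has an inverted U shape with $p(0) = 0$, $p(T/2) = \mu$ and $p(T) = 0$.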

# Model parameters

r = 0.05

β = 1/(1 + r)

T = 50

c_bar = 1.5

σ = 0.15

μ = 2

q = 1e4

m1 = T * (μ/(T/2)**2)

m2 = -(μ/(T/2)**2)

# Formulate as an LQ problem

Q = 1

R = np.zeros((4, 4))

Rf = np.zeros((4, 4))

Rf[0, 0] = q

A = [[1 + r, -c_bar, m1, m2],

[0, 1, 0, 0],

[0, 1, 1, 0],

[0, 1, 2, 1]]

B = [[-1],

[ 0],

[ 0],

[ 0]]

C = [[σ],

[0],

[0],

[0]]

# Compute solutions and simulate

lq = LQ(Q, R, A, B, C, beta=β, T=T, Rf=Rf)

x0 = (0, 1, 0, 0)

xp, up, wp = lq.compute_sequence(x0)

# Convert results back to assets, consumption and income

ap = xp[0, :] # Assets

c = up.flatten() + c_bar # Consumption

time = np.arange(1, T+1)

income = σ * wp[0, 1:] + m1 * time + m2 * time**2 # Income

# Plot results

n_rows = 2

fig, axes = plt.subplots(n_rows, 1, figsize=(12, 10))

plt.subplots_adjust(hspace=0.5)

bbox = (0., 1.02, 1., .102)

legend_args = {'bbox_to_anchor': bbox, 'loc': 3, 'mode': 'expand'}

p_args = {'lw': 2, 'alpha': 0.7}

axes[0].plot(range(1, T+1), income, 'g-', label="non-financial income",

**p_args)

axes[0].plot(range(T), c, 'k-', label="consumption", **p_args)

axes[1].plot(range(T+1), ap.flatten(), 'b-', label="assets", **p_args)

axes[1].plot(range(T+1), np.zeros(T+1), 'k-')

for ax in axes:
    ax.grid()
    ax.set_xlabel('Time')
    ax.legend(ncol=2, **legend_args)

plt.show()

Exercise 57.2

Replicate the figure on work and retirement shown above.

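The parameters are $r = 0.05$, $\beta = 1 / (1 + r)$, $\bar c = 4$, $\mu = 4$, $\sigma = 0.35$, $K = 40$, $T = 60$, $s = 1$ and $q = 10^4$.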

To understand the overall procedure, carefully read the section containing that figure.

Hint

First, in order to make our approach work, we must ensure that both LQ problems have the same state variables and control.

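As with previous applications, the control can be set to $u_t = c_t - \bar c$.

For lq_working, $x_t, A, B, C$ can be chosen as in (57.26).

Recall that $m_1, m_2$ are chosen so that $p(K) = \mu$ and $p(2K) = 0$.

For lq_retired, use the same definition of $x_t$ and $u_t$, but modify $A, B, C$ to correspond to constant income $y_t = s$.

For lq_retired, set preferences as in (57.27).

For lq_working, preferences are the same, except that $R_f$ should be replaced by the final value function that emerges from iterating lq_retired back to the start of retirement.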

With some careful footwork, the simulation can be generated by patching together the simulations from these two separate models.

Solution to Exercise 57.2

This is a permanent income / life-cycle model with polynomial growth in income over working life followed by a fixed retirement income.

The model is solved by combining two LQ programming problems as described in the lecture.

# Model parameters

r = 0.05

β = 1/(1 + r)

T = 60

K = 40

c_bar = 4

σ = 0.35

μ = 4

q = 1e4

s = 1

m1 = 2 * μ/K

m2 = -μ/K**2

# Formulate LQ problem 1 (retirement)

Q = 1

R = np.zeros((4, 4))

Rf = np.zeros((4, 4))

Rf[0, 0] = q

A = [[1 + r, s - c_bar, 0, 0],

[0, 1, 0, 0],

[0, 1, 1, 0],

[0, 1, 2, 1]]

B = [[-1],

[ 0],

[ 0],

[ 0]]

C = [[0],

[0],

[0],

[0]]

# Initialize LQ instance for retired agent

lq_retired = LQ(Q, R, A, B, C, beta=β, T=T-K, Rf=Rf)

# Iterate back to start of retirement, record final value function

for i in range(T-K):
    lq_retired.update_values()

Rf2 = lq_retired.P

# Formulate LQ problem 2 (working life)

R = np.zeros((4, 4))

A = [[1 + r, -c_bar, m1, m2],

[0, 1, 0, 0],

[0, 1, 1, 0],

[0, 1, 2, 1]]

B = [[-1],

[ 0],

[ 0],

[ 0]]

C = [[σ],

[0],

[0],

[0]]

# Set up working life LQ instance with terminal Rf from lq_retired

lq_working = LQ(Q, R, A, B, C, beta=β, T=K, Rf=Rf2)

# Simulate working state / control paths

x0 = (0, 1, 0, 0)

xp_w, up_w, wp_w = lq_working.compute_sequence(x0)

# Simulate retirement paths (note the initial condition)

xp_r, up_r, wp_r = lq_retired.compute_sequence(xp_w[:, K])

# Convert results back to assets, consumption and income

xp = np.column_stack((xp_w, xp_r[:, 1:]))

assets = xp[0, :] # Assets

up = np.column_stack((up_w, up_r))

c = up.flatten() + c_bar # Consumption

time = np.arange(1, K+1)

income_w = σ * wp_w[0, 1:K+1] + m1 * time + m2 * time**2 # Income

income_r = np.full(T-K, s)

income = np.concatenate((income_w, income_r))

# Plot results

n_rows = 2

fig, axes = plt.subplots(n_rows, 1, figsize=(12, 10))

plt.subplots_adjust(hspace=0.5)

bbox = (0., 1.02, 1., .102)

legend_args = {'bbox_to_anchor': bbox, 'loc': 3, 'mode': 'expand'}

p_args = {'lw': 2, 'alpha': 0.7}

axes[0].plot(range(1, T+1), income, 'g-', label="non-financial income",

**p_args)

axes[0].plot(range(T), c, 'k-', label="consumption", **p_args)

axes[1].plot(range(T+1), assets, 'b-', label="assets", **p_args)

axes[1].plot(range(T+1), np.zeros(T+1), 'k-')

for ax in axes:
    ax.grid()
    ax.set_xlabel('Time')
    ax.legend(ncol=2, **legend_args)

plt.show()

Exercise 57.3

Reproduce the figures from the monopolist application given above.

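For parameters, use $a_0 = 5$, $a_1 = 0.5$, $\sigma = 0.15$, $\rho = 0.9$, $\beta = 0.95$ and $c = 2$, while $\gamma$ varies across the figures (the solution code below uses $\gamma = 1$).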

Solution to Exercise 57.3

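The first task is to find the matrices $A, B, C, Q, R$ that define the LQ problem.

Recall that $x_t = (\bar q_t \;\, q_t \;\, 1)'$, while $u_t = q_{t+1} - q_t$.

Letting $m_0 := (a_0 - c) / 2 a_1$ and $m_1 := 1 / 2 a_1$, we can write $\bar q_t = m_0 + m_1 d_t$, and then, with some manipulation

$$
\bar q_{t+1} = m_0 (1 - \rho) + \rho \bar q_t + m_1 \sigma w_{t+1}
$$

By our definition of $u_t$, the dynamics of $q_t$ are $q_{t+1} = q_t + u_t$.

Using these facts you should be able to build the correct $A, B, C$ matrices (and then check them against those found in the solution code below).

Suitable $R, Q$ matrices can be found by inspecting the objective function, which we repeat here for convenience:

$$
\min \mathbb{E} \left\{ \sum_{t=0}^{\infty} \beta^t \left[ a_1 (q_t - \bar q_t)^2 + \gamma u_t^2 \right] \right\}
$$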

Our solution code is

# Model parameters

a0 = 5

a1 = 0.5

σ = 0.15

ρ = 0.9

γ = 1

β = 0.95

c = 2

T = 120

# Useful constants

m0 = (a0-c)/(2 * a1)

m1 = 1/(2 * a1)

# Formulate LQ problem

Q = γ

R = [[ a1, -a1, 0],

[-a1, a1, 0],

[ 0, 0, 0]]

A = [[ρ, 0, m0 * (1 - ρ)],

[0, 1, 0],

[0, 0, 1]]

B = [[0],

[1],

[0]]

C = [[m1 * σ],

[ 0],

[ 0]]

lq = LQ(Q, R, A, B, C=C, beta=β)

# Simulate state / control paths

x0 = (m0, 2, 1)

xp, up, wp = lq.compute_sequence(x0, ts_length=150)

q_bar = xp[0, :]

q = xp[1, :]

# Plot simulation results

fig, ax = plt.subplots(figsize=(10, 6.5))

# Some fancy plotting stuff -- simplify if you prefer

bbox = (0., 1.01, 1., .101)

legend_args = {'bbox_to_anchor': bbox, 'loc': 3, 'mode': 'expand'}

p_args = {'lw': 2, 'alpha': 0.6}

time = range(len(q))

ax.set(xlabel='Time', xlim=(0, max(time)))

ax.plot(time, q_bar, 'k-', lw=2, alpha=0.6, label=r'$\bar q_t$')

ax.plot(time, q, 'b-', lw=2, alpha=0.6, label='$q_t$')

ax.legend(ncol=2, **legend_args)

s = rf'dynamics with $\gamma = {γ}$'

ax.text(max(time) * 0.6, 1 * q_bar.max(), s, fontsize=14)

plt.show()
